Friendly Fire: The Pentagon vs. Anthropic

Everyone Loses

In the 1986 Vietnam epic Platoon, the climax isn’t a battle against the Viet Cong. It’s one American sergeant shooting another.

Last Friday, the Pentagon shot one of its own.

The week everything fell apart

On Monday, Anthropic was the primary AI supplier to the US Government. By Friday, Pete Hegseth had canceled their contract, announced a deal with OpenAI to replace them, and labeled the company a “supply chain risk”. That designation has only been used three times before, all for foreign companies accused of espionage.

The next day, the military used Claude in its strikes against Iran.

The negotiations were ugly. Senior government officials called CEO Dario Amodei a “liar with a God complex”, and Anthropic responded with legal threats and a PR campaign.

The red lines

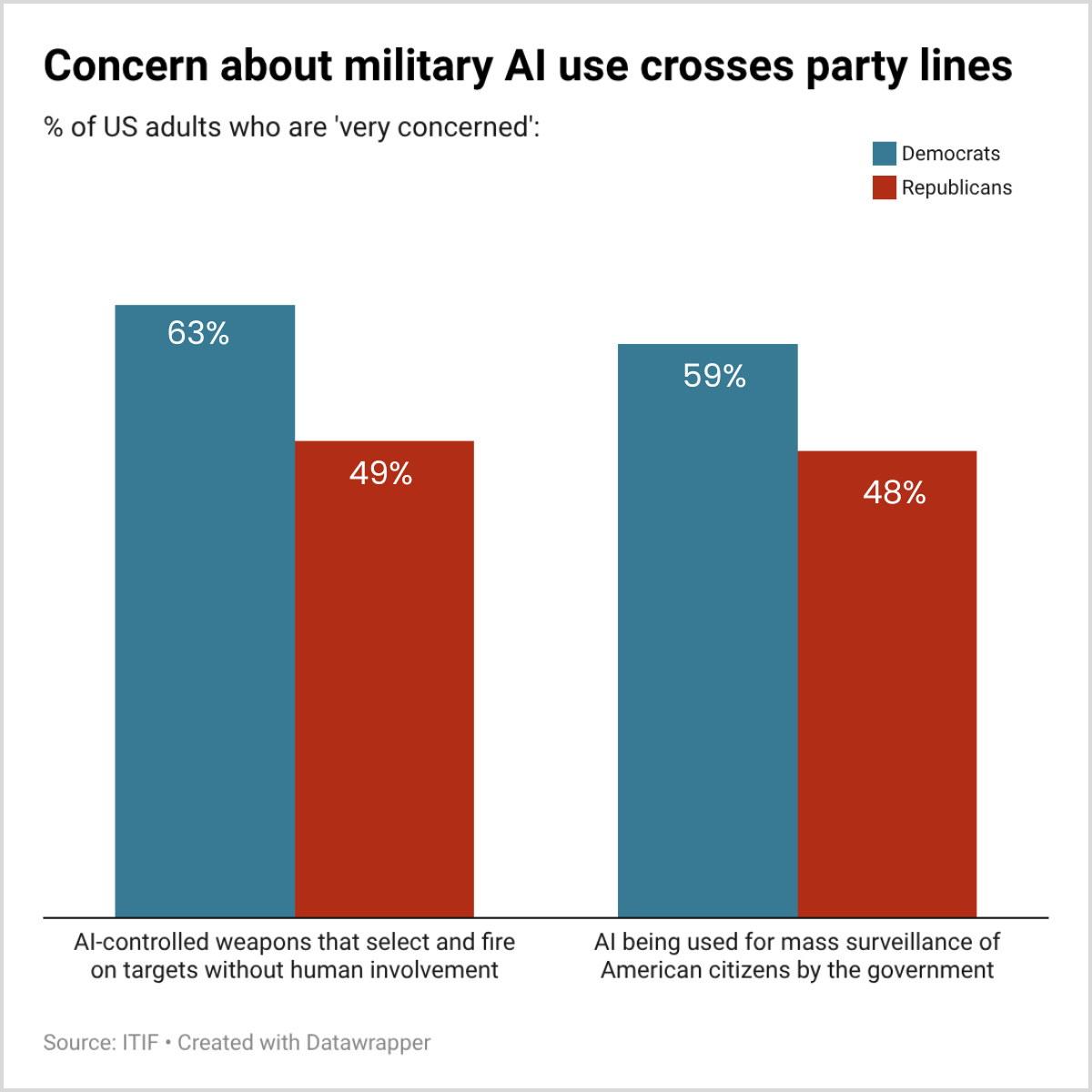

At the heart of the dispute are two ‘red lines’ in Anthropic’s contract: no fully autonomous weapons, because its technology isn’t reliable enough, and no mass surveillance of American citizens, which it argues violates fundamental rights.

The Department of Defense wants access to Claude for “all lawful purposes”. A senior official put it this way: if a nuclear missile is heading towards the US, do you want Claude refusing to help because it violates your terms of service? That’s an extreme example. But Claude can sift through troves of intelligence data and assist with military decisions, even if it doesn’t pull the trigger.

The DoD has said it won’t use AI for autonomous weapons or mass surveillance. And the very red lines Anthropic fought for are included in the Pentagon’s new deal with OpenAI.

This raises an uncomfortable question for Amodei: If he’s so pro-military and pro-American, why couldn’t he reach the deal that OpenAI made?

This isn’t just ironic. Both sides will pay a price.

Everyone loses: The Military

Anthropic is an ideal AI partner for the military. Claude is arguably the best AI model, and Anthropic has spent years earning security clearances and building custom versions for military use. Its CEO actively advocates for global US AI leadership and chip controls to slow China. Worse still, replacing Anthropic means ripping out and rebuilding systems currently running on Claude.

This will make future vendors think twice. Anthropic had worked under its existing contract, red lines included, for a year. The message is clear: cooperate fully, under conditions that could change at any moment, or risk the same treatment.

The Pentagon is losing the PR battle too. Tagging a pro-American company as a supply chain risk looks like retaliation, and like the kind of government interference the US routinely criticizes China for. Its stance is highly unpopular, even with Republican voters.

Everyone loses: Anthropic

Backlash from the incident has driven a wave of Claude consumer signups, vaulting it to #1 in the App Store. But that doesn’t offset the damage to its core business.

The $200 million contract is material, but the supply chain risk designation is far more damaging. It means any company that currently does business with the Pentagon can’t use Claude.

Taken literally, this would mean the end of the company. Anthropic’s models are hosted by Amazon, Microsoft and Google, all of which have military contracts.

It won’t come to that. But it doesn’t have to. Any company that might someday want government business now has to think twice before building on Claude. That’s most of corporate America.

Who decides?

Technology has always been critical to military advantage. Nuclear weapons, the internet, and GPS all originated in government-funded programs before reaching the private sector.

AI is the opposite. The best systems have been built by private companies, and the government is negotiating for access to something it doesn’t control.

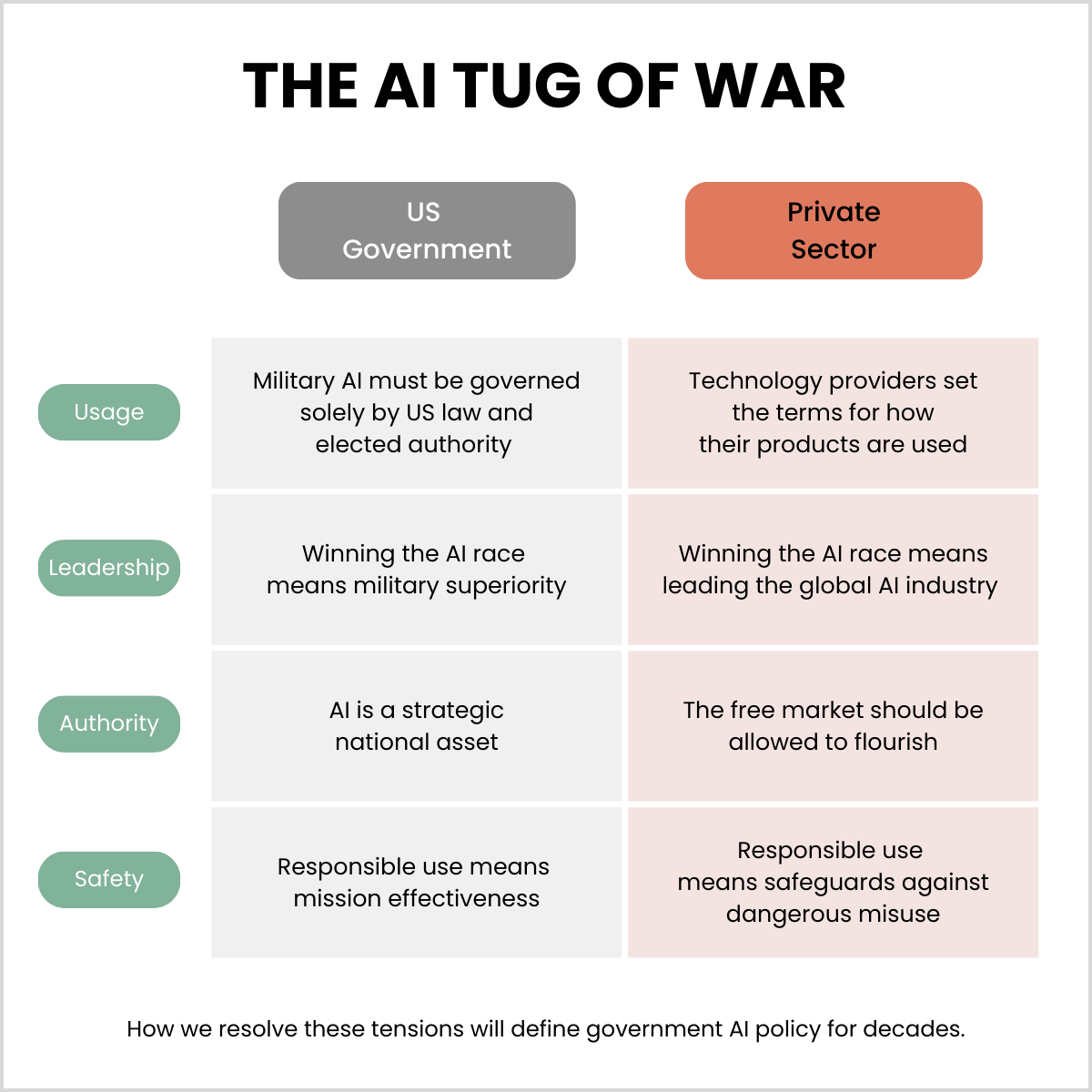

This dispute is the first major test of tensions that will define government AI policy for decades.

These tensions are real, but none of them are unsolvable. Take responsible use: the military wants mission effectiveness, and Anthropic wants safeguards where the technology isn’t ready.

Both are legitimate, but the tougher question is who gets to decide. Can a private company set the terms for how its products are used? Or does a different standard apply for military use? Any company working with the military faces some version of this question.

A way out

There’s an off-ramp here, if anyone is willing to take it.

The government announced a six-month transition period, which could be extended. Anthropic will challenge the supply chain designation in court, and legal experts don’t expect it to hold up.

The OpenAI deal provides a template for compromise. Both sides need to cool off, save face and find a deal. Six months is plenty of time. Unless egos get in the way.

Dad Joke: Why did the Pentagon ban candy canes? They had too many red lines. 😂