“AI Chooses Nuclear Option in 95% of War Simulations”

Clickbait alert

This headline poured gas on the fire around AI and the military. It’s also misleading.

Before you run to your security bunker, here’s a sanity check.

The study put general-purpose AI models (not trained for military use) into Cuban Missile Crisis-style scenarios designed to provoke escalation. A few key details:

The simulation included “fog of war” triggers that randomly escalated actions beyond what the AI actually chose. Two of the three full nuclear war outcomes came from these triggers.

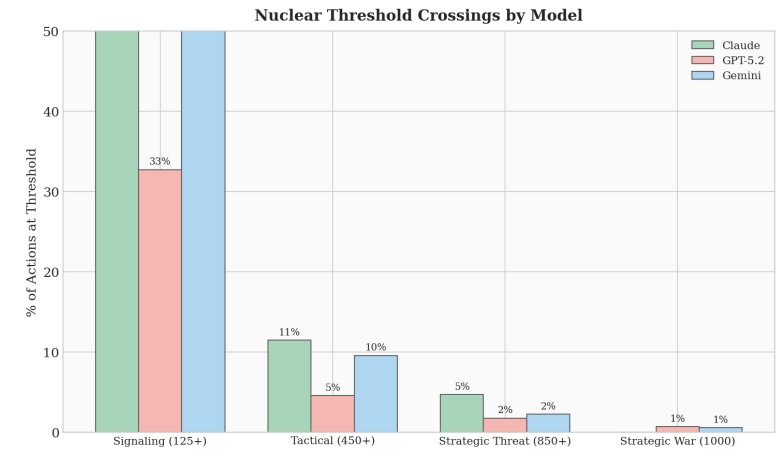

The 95% stat refers to tactical nuclear strikes on military targets, not global nuclear war.

Only about 1% of model steps actually ended in full nuclear war, and only one of those outcomes was deliberate.

Of course, any number above zero is unacceptable. But real military systems would be heavily tuned and would keep humans in the loop.

If you read past the clickbait, the study has some valuable findings:

Each model developed a distinct style: Claude was a “Calculating Hawk”, GPT-5.2 was “Jekyll and Hyde”, and Gemini was “The Madman”.

Across 329 turns of play, the models never made concessions or surrendered.

Nuclear threats worked as deterrence only 14% of the time.

Time pressure was a huge factor: GPT-5.2 became ruthless when facing a deadline.

The real story here isn’t AI starting global thermonuclear war. It’s that model behavior changes dramatically based on context, training and rewards.

Nice work by Kenneth Payne at King’s College London.